
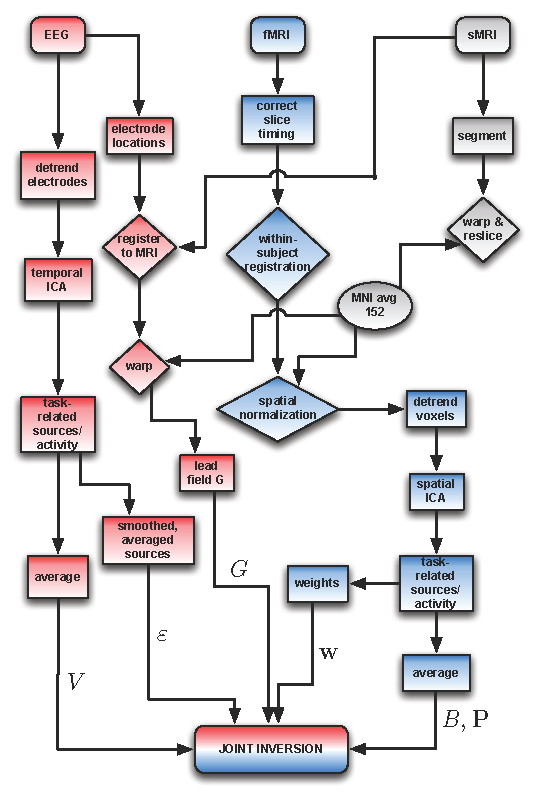

I’ve discovered that the quickest way to frustrate multiple students at one time is to give my abstract random side full rein in the classroom. If things will change, it is kind to give the child advance warning so they can be prepared. It is also important not to talk in generalities but to be as literal and specific as possible, especially when you expect something from the child. The very best approach when working with Concrete Sequential (CS) children is to be as consistent as possible, to be organized, to stick to routines or at least allow the children to follow established routines, to display common sense, and to explain expectations and desired outcomes as clearly as possible. CS learners might be viewed negatively at times because they can be seen as perfectionistic, inflexible, impatient, detail-oriented, and stubborn.

The Concrete Sequential Learning Style

Concrete Sequential learners have gifts of great organization, attention to detail, a tendency to always complete tasks, high productivity, and reliable dependability.

(ii) The same neural dynamics with upper panels projected into two-dimensional space. (i) Five time series of the overlap with target 9, the most recently trained pattern, from five random initial points.

(e),(f) Recall performance in (e) and Lyapunov dimension and P C 80 in (f) are plotted. Black solid and dashed lines indicate overlap with input and random patterns, respectively, for reference.

(d) Maximum overlaps of the spontaneous activity with targets are plotted for different ε. Dots in blue and pink are for ε = 0.03 and ε = 1.0, respectively. Dots in blue and orange are for T = M and T = 30M, respectively.

(b) Scatter plot of maximum overlap of the spontaneous activity with a target against its recall performance. In the right panel, probability density functions (p.d.f.) of these overlaps in longer intervals (0 < t < 1000) and their standard deviations are also plotted as bars.
Here, N = 300 and M = 90, as in the following panels. (a) Overlaps of spontaneous activities without input m 45 are plotted from t = 500 to 700 for T = 2M, 5M, and 20M in blue, orange, and green, respectively, in the left panel.

(f) Σ_μ (s κ,μ)², 1 ≤ κ ≤ M, for s = a, b, c, d are plotted during the learning process in the modified pseudoinverse model corresponding to Fig. These values as a function of α are shown in (d), while those as a function of N are shown in (e).

(d),(e) SD of ξ μ J ξ ν / N is plotted for T = M in blue and for T = 30M in magenta. Different points indicate different networks.

(c) Standard deviation (SD) of nondiagonal elements of S during the learning process. Black line represents the performance for the original matrix for reference. Green line indicates the performance for this matrix, while the red one indicates the performance for the matrix rescaled so that ∑_{j≠i} J_ij² = 1.

(b) Recall performance for the matrix consisting of only M dominant singular vectors. We plot points for 60 randomly selected pairs (μ, ν). In (iii), a scatter plot between a κ,μ and c κ,ν (μ ≠ ν) for the same parameters as left. In (ii), a scatter plot between a κ,μ and c κ,μ for the same parameters as (iii).

(a) In (i), a scatter plot between a κ,μ and b κ,μ for 1 ≤ κ, μ ≤ M and M = 60, T = 30M. Distance from targets was 0, 0.1, or 0.5.

Five trajectories were initialized either from the vicinity of the target (gray, |x − ξ| < 0.001) or from randomly chosen states (black). (d) Stability of modified pseudoinverse attractors. (c) The number of learning steps required for the modified pseudoinverse model to learn αN maps is plotted. Gray line is the performance in our model for reference.

These two conditions are overlapping for Hopfield (blue) and Perceptron (green). Filled and empty circles represent initial conditions near and far from the target, respectively. Magenta, blue, and green circles indicate performance in the modified pseudoinverse model, Hopfield-type network, and Perceptron model, respectively.

(b) Recall performance as a function of memory load for different models. (a) Overlaps with targets are illustrated for M = 40, 60, 80, 100, 120 for the Perceptron model, same as that in Fig. Comparison of memory performance with other models.
